Summary

UX dark patterns (also known as deceptive patterns) are design strategies that manipulate user behaviour and desires, encouraging users to interact with a system in ways that prioritise business interests over their own best interests. Deceptive patterns can lead to excessive digital use and addiction (e.g. in online shopping, games and social media).

Introduction

Design is, by nature, a persuasive and behavioural practice that aims to achieve a desired change. In my previous article, Why Design Thinking Doesn’t Work, I discussed a design process that emphasises user-centrism and focuses on solving user experience problems by understanding user behaviour. For many decades, UX designers and HCI experts have used psychological theories to improve the user experience and help users complete tasks more efficiently (e.g., in less time and with fewer distractions).

However, not all intentions are good. The power to shape user behaviour also brings business benefits, such as increased user retention, more time spent on platforms, and more purchases, including unnecessary ones. Design patterns or strategies that use this power against users’ interests are called dark patterns or deceptive patterns. Many articles have discussed dark patterns from a consumer perspective. In this article, I focus on excessive social media use and addiction, and on the behavioural theories that underpin dark patterns in this context. I use the terms excessive use and addiction interchangeably because the two behaviours are closely related.

It is worth mentioning that dark patterns are evolving. Feel free to share any examples and thoughts on dark patterns in the comments.

What are dark patterns (deceptive patterns)?

Dark patterns in UX are design strategies that aim to trick users into unconsciously taking actions that prioritise the company's interests over their own. We encounter deceptive patterns every day. They exploit cognitive biases, emotional triggers, and behavioural tendencies to influence actions such as spending more time on a platform, sharing personal data, subscribing to services, or making purchases.

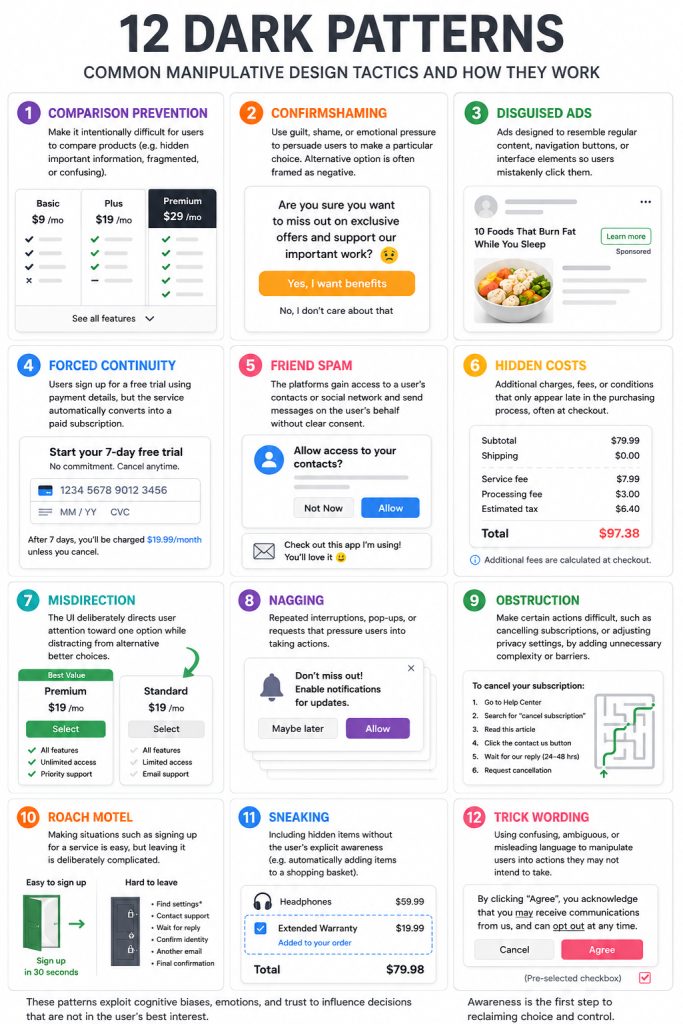

The term was coined in 2010 by Harry Brignull, the author of Deceptive Patterns. Brignull also launched the Deceptive Design Hall of Shame, an archive of dark pattern case studies from around the internet. He described the following 12 types of dark patterns:

- Comparison Prevention: Intentionally making it difficult for users to compare products (e.g., by hiding important information, fragmenting the experience, or making it confusing).

- Confirmshaming: Using guilt, shame, or emotional pressure to persuade users to make a particular choice, often by framing the alternative negatively.

- Disguised Ads: Ads designed to resemble regular content, natural language, navigation buttons, or interface elements so users mistakenly click them.

- Forced Continuity: Users sign up for a free trial using their payment details, and the service automatically converts the trial into a paid subscription without a clear reminder.

- Friend Spam: Platforms access a user’s contacts or social network friends and send messages on the user’s behalf without clear consent.

- Hidden Costs: Additional charges, fees, or conditions that only appear late in the purchasing process, often at checkout.

- Misdirection: The UI deliberately directs user attention toward one option while distracting users from alternative, better choices.

- Nagging: Repeated interruptions, pop-ups, or requests that pressure users into taking actions (e.g. enabling notifications, accepting cookies, or subscribing).

- Obstruction: Making certain actions difficult, such as cancelling subscriptions or adjusting privacy settings, by adding unnecessary complexity or barriers.

- Roach Motel: Making it easy to sign up for a service, but deliberately complicated to leave it.

- Sneaking: Including hidden items without the user’s explicit awareness (e.g. automatically adding items to a shopping basket).

- Trick Wording: Using confusing, ambiguous, or misleading language to manipulate users into actions they may not intend to take.

Dark patterns in UX are not limited to the above types. Companies continue to trial different tactics to increase their revenues at the expense of users’ interests.

Whether intentionally or not, dark patterns often emerge from a UX process that focuses on a narrow range of metrics and ignores the holistic user experience. For example, companies use UX research methods to improve platform metrics such as user count, time spent on the platform, ad clicks, and views. Tools like A/B testing let UX designers compare different UI designs and choose the one that performs better on the metrics they measure. Other companies skip the research entirely and simply copy tactics that have proven successful on other platforms.
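
The narrow-metric problem can be sketched in a few lines of Python. Everything here is hypothetical and invented for illustration: the two variants, the well-being score, and all the numbers. None of it is data from any platform. The sketch shows only that a "winner" chosen by mean time on platform can lose on a user-centred measure.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    variant: str      # hypothetical: "A" = paginated feed, "B" = infinite scroll
    minutes: float    # time spent on the platform in this session
    wellbeing: float  # hypothetical post-session self-report, 1 (low) to 5 (high)


def pick_winner(sessions: list[Session], metric: str) -> tuple[str, dict[str, float]]:
    """Return the variant with the highest mean `metric`, plus all variant means."""
    variants = sorted({s.variant for s in sessions})
    scores = {
        v: mean(getattr(s, metric) for s in sessions if s.variant == v)
        for v in variants
    }
    return max(scores, key=scores.get), scores


# Made-up observations, for illustration only.
sessions = [
    Session("A", 12, 4.0), Session("A", 15, 3.8), Session("A", 10, 4.2),
    Session("B", 25, 3.1), Session("B", 30, 2.9), Session("B", 28, 3.0),
]

# Optimising engagement alone selects variant B...
print(pick_winner(sessions, "minutes")[0])
# ...while the hypothetical well-being measure favours variant A.
print(pick_winner(sessions, "wellbeing")[0])
```

Reporting a user-centred measure next to the engagement metric, rather than optimising engagement alone, is one way to make this trade-off visible.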

UX Dark Patterns and Their Role in Social Media Addiction

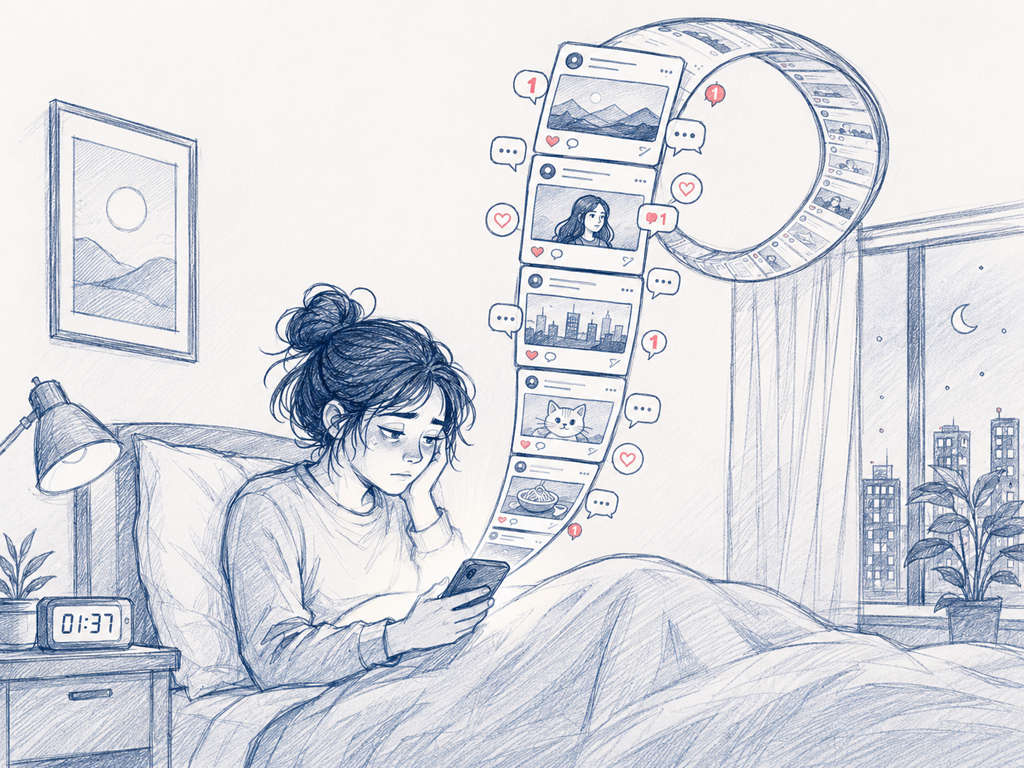

A few decades ago, social media started as a way to connect with friends, follow interests, and stay informed about the news. Over time, it appears to have done more harm than good, especially among young users, as addictive algorithms and company tactics aim to keep users attached to the platforms and disconnected from the real world. Several studies have linked social media use to mental health problems. While these effects are more common among young users, older users are also susceptible. Studies by Twenge and Campbell (2018) and Liu et al. (2022) found that psychological well-being began to decline beyond about one hour of daily social media use.

Overall, studies have associated using social media for more than 2.5 hours a day with several mental and cognitive issues, such as:

- Depression, especially after using the platforms.

- Anxiety, which arises when users compare themselves with friends’ updates and achievements.

- Emotional instability, driven by shifting emotional reactions to frequently updated feed content.

- Reduced self-control and attention, as users compare themselves to others and are influenced by others’ interactions.

- Fear of Missing Out (FOMO), driven by time-limited, frequently updated content.

- Shorter memory span and reduced attention and cognitive ability, especially when consuming short videos.

In adolescents, the adverse impact is magnified by impulsive reactions and less stable personalities, leading to further addictive behaviour, self-harm, and suicidal tendencies (Tørmoen et al., 2023).

Over the past two decades, several studies have confirmed the negative impact of social media, which is closely linked to the dark patterns and algorithms used by social media companies. This raises the question: is the companies’ behaviour purely coincidental?

Examples of UX Dark Patterns

Social media platforms aim to keep users continuously engaged. In-platform tactics include showing content of interest or highlighting friends’ activities. Off-platform tactics include email updates, lock-screen notifications, and red badges on mobile app icons. In Lally and Gardner’s account of habit formation, these are cues (or triggers) that automatically prompt users to open the app, whether to escape boredom or stress or simply out of curiosity. The user then engages with the platform and is rewarded with interesting content. With repetition, the brain is hooked and repeats the behaviour automatically the next time it encounters similar cues.
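
The cue → behaviour → reward loop can be illustrated with a toy Python model. The update rule, the learning rate, and the "automatic" threshold are assumptions chosen for illustration; they are not taken from Lally and Gardner. In this sketch, each rewarded repetition moves habit strength a fixed fraction of the way toward its maximum. Strength therefore rises quickly at first and then levels off. Once it passes the hypothetical threshold, the app is opened automatically whenever the cue appears.

```python
def habit_strength(repetitions: int, rate: float = 0.1, max_strength: float = 1.0) -> float:
    """Toy model (an assumption): each rewarded cue -> behaviour repetition
    closes a fixed fraction `rate` of the remaining gap to `max_strength`."""
    strength = 0.0
    for _ in range(repetitions):
        strength += rate * (max_strength - strength)
    return strength


# Hypothetical point at which opening the app no longer needs a conscious decision.
AUTOMATIC_THRESHOLD = 0.8


def opens_app_automatically(repetitions: int) -> bool:
    return habit_strength(repetitions) >= AUTOMATIC_THRESHOLD


for n in (1, 5, 10, 20, 40):
    print(n, round(habit_strength(n), 3), opens_app_automatically(n))
```

With these assumed parameters, the habit crosses the threshold after 16 rewarded repetitions. Each repetition after that adds very little strength, but the behaviour is already automatic.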

But how do UX dark patterns reinforce this loop and lead to the adverse psychological effects highlighted above? Here are some examples:

Infinite scroll (doomscrolling)

One of the first UX design principles I teach my students is to reduce the number of steps (clicks) users need to achieve their goals. Social media platforms took this principle even further. By eliminating pagination and the “Next” button, they removed the moment at which users must consciously decide: “Do I want to continue?” Good examples are the continuous feeds on TikTok and Facebook.

The automaticity, variable rewards, and absence of closure in infinite scroll can be explained by the Fogg Behavior Model. According to the model, a behaviour occurs when motivation, ability, and a prompt converge at the same moment. Motivation and ability trade off against each other: the easier a behaviour is, the less motivation a prompt needs to succeed. Infinite scroll makes continuing almost effortless while the desire to see new content is high. Each new item therefore acts as a prompt that users act on almost automatically. The result is doomscrolling, where social media use becomes an automatic behaviour performed without thinking.
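
The Fogg Behavior Model (B = MAP) can be sketched as a simple rule. In Fogg's model the action line is a curve, not a number. Treating "motivation and ability together" as their product against a fixed threshold is an assumption of this sketch, as are the 0 to 1 scales and all the values. The sketch shows how raising ability alone, as infinite scroll does, can push the same level of motivation over the action line.

```python
from dataclasses import dataclass

# Hypothetical threshold standing in for Fogg's action line (really a curve).
ACTION_LINE = 0.25


@dataclass
class Moment:
    motivation: float  # 0 (none) to 1 (very high)
    ability: float     # 0 (very hard) to 1 (effortless)
    prompted: bool     # did a prompt arrive at this moment?


def behaviour_occurs(m: Moment) -> bool:
    """B = MAP: the behaviour happens only when a prompt arrives while
    motivation and ability together are above the action line.
    Combining them as a product is an assumption of this sketch."""
    return m.prompted and m.motivation * m.ability >= ACTION_LINE


# Paginated feed: tapping "Next page" takes deliberate effort.
paginated = Moment(motivation=0.4, ability=0.5, prompted=True)
# Infinite scroll: the next post is already there, so continuing is effortless.
infinite = Moment(motivation=0.4, ability=0.95, prompted=True)

print(behaviour_occurs(paginated))  # False: 0.4 * 0.5 = 0.2 is below the line
print(behaviour_occurs(infinite))   # True: 0.4 * 0.95 = 0.38 is above the line
```

Motivation is identical in both cases. Only ability changes, which is the lever infinite scroll pulls.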

In the European Commission’s 2026 preliminary finding against TikTok, infinite scroll, autoplay, push notifications, and personalised recommendations are identified as addictive design features that may fuel compulsive use.

Push notifications and red badges

Notifications are one of the most commonly used strategies in the digital world. They come in different types, such as lock-screen alerts, vague messages, and in-app notifications, and can be accompanied by sounds or vibrations. Social media platforms use vaguely worded notifications to drive users to open the platform. For example, LinkedIn used to email users about their connections’ messages, and users simply ignored the unimportant ones. The platform then switched to notifications that do not show the message content, which increased the open rate. The added uncertainty prompts users to open the platform to read the message. Similarly, app-icon badges show as many alerts as possible, regardless of their importance to the user.

In colour psychology, red is associated with warning, error, and urgency, and it is used to attract attention when there is a danger or an error. Ironically, in the digital world it has become the default colour for all alerts. This ignores the negative impact of the continuous state of alert users face when they unlock their phones to a storm of notifications.

This dark pattern relates to nudge theory (introduced by Thaler and Sunstein). The theory defines a nudge as any aspect of “choice architecture” that changes behaviour predictably while remaining easy and cheap to avoid. Notifications invert this idea: they are usually switched on by default, and social media platforms make them complicated to switch off. This is closer to what Thaler (2018) calls “sludge” and also aligns with the Roach Motel dark pattern.

Social proof metrics

Social media platforms are built on social proof metrics (e.g. likes, views, shares, and follower count). Ranking algorithms quantify the user experience and treat content with higher metrics as more important than other content, even if it is less valuable or even false. This distorts users’ perceptions of what matters to them and to the community around them, with negative consequences for their mental health, especially at a young age.
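
An engagement-only ranking can be sketched in a few lines of Python. The weights, the posts, and the `fact_checked` flag are hypothetical illustrations; real platform ranking systems are far more complex and not public in this form. The point is structural: if the score is built only from likes, shares, and views, nothing in it rewards accuracy or value to the user.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    likes: int
    shares: int
    views: int
    fact_checked: bool  # hypothetical flag, deliberately ignored by the score


# Hypothetical weights: shares count most because they spread content further.
WEIGHTS = {"likes": 1.0, "shares": 3.0, "views": 0.01}


def social_proof_score(post: Post) -> float:
    """Engagement-only score: nothing here measures accuracy or user value."""
    return (WEIGHTS["likes"] * post.likes
            + WEIGHTS["shares"] * post.shares
            + WEIGHTS["views"] * post.views)


def rank_feed(posts: list[Post]) -> list[Post]:
    return sorted(posts, key=social_proof_score, reverse=True)


feed = rank_feed([
    Post("Careful explainer", likes=120, shares=10, views=4_000, fact_checked=True),
    Post("Outrage-bait rumour", likes=900, shares=400, views=50_000, fact_checked=False),
])
for post in feed:
    print(post.title, social_proof_score(post))
```

The unverified post ranks first purely because of its metrics.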

Social proof is underpinned by three main theories:

- Social comparison: In his paper, A Theory of Social Comparison Processes, Leon Festinger stated that when objective means of evaluation are unavailable, people evaluate their opinions and abilities by comparing themselves to others. This happens when users view each other's posts on social media.

- Normative influence: According to Morton Deutsch and Harold B. Gerard’s paper, A Study of Normative and Informational Social Influences upon Individual Judgment, people conform because they want approval, acceptance, or belonging, and because they want to avoid disapproval or social rejection. On social media, users follow trends to align their views and styles with those of their group, which can isolate them from the wider, more diverse world.

- Reward learning: In Skinner’s theory of operant conditioning, behaviour is shaped by reinforcement and punishment. Likes and comments act as quick rewards that reinforce users’ decision to keep posting. The eagerness to see these rewards drives users to keep refreshing and revisiting the platform to see how followers’ interactions unfold.
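
Skinner's work also showed that the schedule of reinforcement matters, not just the reward itself. The Python sketch below models pull-to-refresh as a variable-ratio schedule. Each refresh has a fixed chance of revealing new likes, so the number of refreshes between rewards is unpredictable. The probability value and the function name are illustrative assumptions, not measurements of any platform.

```python
import random


def refresh_until_reward(rng: random.Random, p_new_likes: float = 0.3) -> int:
    """Simulate pull-to-refresh: each refresh reveals new likes with
    probability `p_new_likes` (a hypothetical value).
    Returns how many refreshes it took to get a reward."""
    refreshes = 0
    while True:
        refreshes += 1
        if rng.random() < p_new_likes:
            return refreshes


rng = random.Random(42)  # fixed seed so the run is reproducible
gaps = [refresh_until_reward(rng) for _ in range(10)]
print(gaps)  # the gaps vary from reward to reward, so the next one is never predictable
```

On average, a reward arrives every 1 / p_new_likes refreshes, but any single refresh might be the one that pays off.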

Recommendation loops

In the early days of Facebook, the newsfeed was presented chronologically. It later changed to an adaptive feed in which the timeline is personalised based on predicted engagement, watch time, clicks, and reactions. Platforms such as YouTube and TikTok present their feeds as “For You” or “Recommended for You.” In its preliminary findings, the European Commission identified TikTok’s highly personalised recommendation system as an addictive design feature.

This mechanism exploits confirmation bias, which traces back to Peter C. Wason's paper "On the Failure to Eliminate Hypotheses in a Conceptual Task." Wason showed that people look for evidence that confirms their rule or choice rather than trying to falsify it. Social media feeds repeatedly show users content similar to what they previously liked or viewed. Contrary to social media's original aim, this dark pattern drives users toward isolation. They see only similar people and content rather than expanding their knowledge and networks, which also reinforces normative influence.
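
The feedback loop can be sketched with a deliberately simplified, greedy recommender in Python. It always recommends the topic the user has engaged with most, and every recommended item that is watched feeds back into the history. The topics, the starting history, and the greedy rule are hypothetical. Real recommender systems are far more complex and usually include some exploration, but the sketch shows how a loop driven only by past engagement narrows what a user sees.

```python
from collections import Counter

TOPICS = ["sports", "science", "music", "politics", "cooking"]


def recommend(history: Counter) -> str:
    """Hypothetical engagement predictor: pick the topic the user has engaged
    with most (ties go to the earliest topic in TOPICS)."""
    return max(TOPICS, key=lambda t: history[t])


def simulate(history: Counter, steps: int) -> Counter:
    """Each recommended item is watched, which feeds back into the history."""
    history = Counter(history)  # copy so the caller's data is not modified
    for _ in range(steps):
        history[recommend(history)] += 1
    return history


start = Counter({"sports": 2, "science": 1, "music": 1})
after = simulate(start, steps=50)
print(after)  # all 50 new views went to "sports"; no new topic was ever shown
```

A chronological feed, by contrast, orders posts by time and ignores the history entirely, so it cannot create this kind of loop.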

Ephemeral content and scarcity loops

Live streams, stories, and limited-time content urge users to engage immediately. For example, live streams receive more interactions in a short time than normal feed posts. Such content triggers FOMO, as users rush to engage with it before it disappears. Studies comparing the impact of different types of social media content on psychological well-being have found that users who consume this type of content show a higher tendency toward social media addiction.

Temporal discounting is the tendency to value rewards and benefits less the longer they are delayed. The concept is based on Paul Samuelson’s discounted utility model. Immediate rewards therefore feel disproportionately valuable. To increase the perceived value of a reward further, platforms limit access to it in time, similar to strategies used in games. On social media, immediate rewards can be a short video, a recommendation, or a story that disappears from the feed after a set time.
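
Samuelson's discounted utility model values a stream of rewards as U = Σ δ^t · u(x_t), where δ (between 0 and 1) is a per-period discount factor. The Python sketch below applies this exponential form to a single reward. The value δ = 0.9, the reward sizes, and the ten-period delay are hypothetical choices for illustration. The sketch shows why a small reward now can outweigh a larger one later.

```python
def discounted_value(reward: float, delay: int, delta: float = 0.9) -> float:
    """Present value of `reward` received after `delay` periods under
    exponential discounting, as in the discounted utility model.
    `delta` (per-period discount factor) is a hypothetical value."""
    return reward * delta ** delay


now = discounted_value(1.0, delay=0)     # e.g. a short video right now
later = discounted_value(1.5, delay=10)  # e.g. a larger, delayed benefit
print(now, round(later, 3))
print("immediate wins" if now > later else "delayed wins")
```

With δ = 0.9, a reward of 1.5 delayed by ten periods is worth about 0.523 today, which is less than the immediate reward of 1.0.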

The above mechanisms are examples of the strategies social media companies use to keep users engaged and hooked. There are several others, such as the privacy maze (making privacy settings hard to find), guilt framing, and disguised advertising.

How Can Designers Avoid UX Dark Patterns?

Many of the dark patterns above can be intentional or unintentional. Companies continue to use UX research methods to adjust platform designs toward better metrics, often unaware of how these changes affect users’ mental health.

This is mainly caused by a focus on the algorithm and the assumption that metrics reflect a positive long-term user experience. Designers should therefore return to the original mindset of the design thinking process:

- Consider the long-term user experience rather than the momentary experience reflected by the platform’s metrics. A systems thinking approach helps designers understand how different strategies affect the user’s wider experience and what negative effects they may have in the future. Simple tools such as the Six Thinking Hats can help designers critically evaluate the impact of different design features.

- Return to the main aim of the design thinking process, which puts the user at the centre through participatory co-design. In co-design, users investigate the wider impact of different UX features with awareness of the potential risks and opportunities.

Responsible designers understand the ethical, psychological and sustainable impact of technology. They do not apply technology blindly, without a clear understanding of the consequences of implementing it.

Conclusion

Designers are responsible for focusing on user needs and benefits and for prioritising them over business needs. They therefore play a crucial role in avoiding UX dark patterns and their adverse impact on users’ psychological well-being. They can promote beneficial technology use and eliminate harmful strategies that will eventually harm both users and the platforms themselves.

Bibliography

Brignull, H. (2011). Dark patterns: Deception vs. honesty in UI design. Interaction Design, Usability, 338, 2-4.

Chen, W., Chan, T. W., Wong, L. H., Looi, C. K., Liao, C. C., Cheng, H. N., ... & Pi, Z. (2020). IDC theory: habit and the habit loop. Research and Practice in Technology Enhanced Learning, 15(1), 10.

Deutsch, M., & Gerard, H. B. (1955). A study of normative and informational social influences upon individual judgment. The Journal of Abnormal and Social Psychology, 51(3), 629.

Festinger, L. (1954). A theory of social comparison processes. Human Relations, 7(2), 117-140.

Fogg, B. J. (2019). Fogg behavior model. Behav. Des. Lab., Stanford Univ., Stanford, CA, USA, Tech. Rep.

Frederick, S., Loewenstein, G., & O’Donoghue, T. (2002). Time discounting and time preference: A critical review. Journal of Economic Literature, 40(2), 351-401.

Lally, P., & Gardner, B. (2013). Promoting habit formation. Health Psychology Review, 7(sup1), S137-S158.

Liu, M., Kamper-DeMarco, K. E., Zhang, J., Xiao, J., Dong, D., & Xue, P. (2022). Time spent on social media and risk of depression in adolescents: A dose–response meta-analysis. International Journal of Environmental Research and Public Health, 19(9), 5164.

Thaler, R. H. (2018). Nudge, not sludge. Science, 361(6401), 431-431.

Tørmoen, A. J., Myhre, M. Ø., Kildahl, A. T., Walby, F. A., & Rossow, I. (2023). A nationwide study on time spent on social media and self-harm among adolescents. Scientific Reports, 13(1), 19111.

Twenge, J. M., & Campbell, W. K. (2018). Associations between screen time and lower psychological well-being among children and adolescents: Evidence from a population-based study. Preventive Medicine Reports, 12, 271-283.

Wason, P. C. (1960). On the failure to eliminate hypotheses in a conceptual task. Quarterly Journal of Experimental Psychology, 12(3), 129-140.
